Introduction

A Job Scheduler is a system where a client defines a job with a schedule and payload, the system stores the definition, triggers the job at the scheduled time, dispatches it to a worker, and tracks the execution result. For recurring jobs, the system computes the next run time and re-schedules automatically. The job moves through states: pending, running, succeeded, failed, retrying.

The system handles secure multi-tenant scheduling, performs resource-aware dispatching across worker pools, enforces fair access to shared compute capacity, and maintains durable audit trails of every job definition change and execution attempt.

Functional Requirements

We extract verbs from the problem statement to identify core operations:

- "defines" a job and "stores" the definition → CRUD operation (Job Management)

- "triggers" at scheduled time and "dispatches" to worker → SCHEDULE + DISPATCH operation (Scheduling & Dispatch)

- "tracks" execution and "moves through" states → STATE TRACKING operation (Execution & Status)

- "enforces" access control and "maintains" audit trails → AUTH operation (Access Control & Auditing)

Each verb maps to a functional requirement. Each requirement builds on the previous, progressively adding components to the architecture.

- Clients can create, update, and delete job definitions. Each job specifies a schedule (one-time or cron), executable reference, environment version, payload, resource profile, priority, timeout, and retry policy.

- The system scans for jobs whose scheduled time has arrived, creates Run records with pinned versions, and dispatches them to workers via a durable task queue.

- Workers report execution progress and results. Clients can query job status, list runs with filters, and fetch detailed attempt history.

- The system enforces multi-tenant isolation with read/write/admin permission levels. All control-plane actions are logged in an immutable audit trail. Secrets are managed through external vault references.

Scale Requirements

- 1,000 job creations per second (think: multi-tenant SaaS platform)

- 50,000 concurrent job executions across all tenants

- Scheduling jitter (delay between scheduled time and actual dispatch): p95 under 1 second for one-time jobs, p95 under 5 seconds for cron jobs

- Millions of job definitions across 10,000+ tenants

- 7-day run history retention for active queries; 90-day cold archive

Non-Functional Requirements

We extract adjectives from the problem statement to identify quality constraints:

- "secure" multi-tenant scheduling → Strict tenant isolation; read/write/admin permission separation; audit trail for all control-plane actions

- "resource-aware" dispatching → Resource profiles must map to actual capacity; admission control prevents overcommit

- "fair" access to compute → Per-tenant quotas and per-queue fairness; prevent noisy-neighbor starvation

- "durable" audit trails → Immutable logs of job changes, ACL modifications, manual triggers, and cancellations

- "predictable" execution → Scheduling jitter within SLA targets; versioned executables for reproducible runs

- Security: Strict tenant isolation with read/write/admin permission separation. Audit all control-plane actions (create, update, delete, pause, trigger, cancel, ACL changes). Schedulers execute powerful workloads, so an unauthorized write can deploy arbitrary code.

- Performance: Scheduling jitter p95 under 1 second for one-time jobs, p95 under 5 seconds for cron. Sustain 1,000 job creations/sec and 50,000 concurrent runs.

- Fairness: Per-tenant concurrency quotas and per-queue weighted fair scheduling. Priority influences ordering but lower-priority tenants are never starved indefinitely.

- Observability: Execution metrics (run duration, queue depth, scheduling lag), alerting on SLA breaches, dashboards for capacity utilization. The system must measure what it promises.

Data Model

The data model is derived from extracting nouns in the problem statement:

- "job" and "schedule" → Job entity with schedule_type, schedule_expr, next_run_at

- "execution" and "run" → Run entity pinning versions and tracking status

- "attempt" and "retry" → Attempt entity classifying exit reasons

- "payload" and "executable" → Job fields executable_ref, env_ref, payload

- "resource profile" → Job field resource_profile for dispatch constraints

- "tenant" → tenant_id on Job and Run for partition isolation

The three-tier model (Job → Run → Attempt) separates the definition from each execution, and each execution from its individual tries. This enables version pinning per run and failure attribution per attempt.

Job

The definition of a scheduled task. Contains the schedule, what to execute, resource requirements, and retry policy. A Job is a template — each execution creates a Run.

Run

A single execution instance of a Job. Created when the scheduler triggers a job. Pins the executable and environment versions at creation time for reproducibility.

Attempt

A single try within a Run. Each retry creates a new Attempt. Records the worker, duration, exit reason, and failure classification.

Job and Run have a one-to-many relationship. A Job has many Runs over time. Each Run belongs to exactly one Job.

Run and Attempt have a one-to-many relationship. A Run has one or more Attempts (initial + retries). Each Attempt belongs to one Run.
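As a rough illustration of the three-tier model, the sketch below expresses Job, Run, and Attempt as Python dataclasses and shows version pinning at run creation. Field names such as `schedule_expr`, `executable_ref`, and `env_ref` come from the text; `job_id`, `run_id`, `attempt_no`, `worker_id`, and the `create_run` helper are assumptions made for illustration, not a prescribed schema.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass
class Job:
    """Definition (template) of a scheduled task."""
    job_id: str
    tenant_id: str
    schedule_type: str            # "ONE_TIME" or "CRON" (assumed values)
    schedule_expr: str            # cron expression or a timestamp
    executable_ref: str
    env_ref: str
    payload: dict
    resource_profile: str
    next_run_at: Optional[datetime] = None
    status: str = "ACTIVE"


@dataclass
class Attempt:
    """One try within a Run."""
    attempt_no: int
    worker_id: str
    exit_code: int
    exit_reason: str              # e.g. "USER_ERROR", "TIMEOUT", "INFRA_FAILURE"


@dataclass
class Run:
    """One execution of a Job, with versions pinned at creation time."""
    run_id: str
    job_id: str
    tenant_id: str
    executable_ref: str
    env_ref: str
    payload: dict
    status: str = "PENDING"
    attempts: List[Attempt] = field(default_factory=list)


def create_run(job: Job, run_id: str) -> Run:
    """Snapshot the job's current refs so later edits do not affect this run."""
    return Run(
        run_id=run_id,
        job_id=job.job_id,
        tenant_id=job.tenant_id,
        executable_ref=job.executable_ref,
        env_ref=job.env_ref,
        payload=dict(job.payload),  # shallow copy; nested values are still shared
    )
```

Because the Run carries its own copy of the refs, updating the Job after `create_run` leaves existing runs and their retries untouched.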

API Endpoints

We derive API endpoints from the functional requirements (verbs identified in Step 0):

- CREATE: "defines a job" → POST /jobs

- UPDATE: "updates a job definition" → PATCH /jobs/{id}

- DELETE: "deletes (soft) a job" → DELETE /jobs/{id}

- ACTION: "triggers an immediate run" → POST /jobs/{id}:runNow

- ACTION: "cancels a job or run" → POST /jobs/{id}:cancel

- ACTION: "pauses/resumes scheduling" → POST /jobs/{id}:pause, POST /jobs/{id}:resume

- READ: "queries job status" → GET /jobs/{id}

- READ: "lists run history" → GET /jobs/{id}/runs

- READ: "fetches a specific run" → GET /runs/{run_id}

- ACL: "grants permissions" → PUT /jobs/{id}/acl

POST /jobs: Create a new job definition with schedule, payload, executable reference, and resource profile.

PATCH /jobs/{id}: Update job definition fields (schedule, payload, resource profile, retry policy, executable/env refs).

DELETE /jobs/{id}: Soft-delete a job. Marks as CANCELED and stops future scheduling. Preserves run history for audit.

POST /jobs/{id}:runNow: Manually trigger an immediate run outside the normal schedule.

GET /jobs/{id}: Get job definition and latest run state.

GET /jobs/{id}/runs?status=FAILED&from=2024-01-10&to=2024-01-15: List runs for a job with optional filters on status, time range, and pagination.

PUT /jobs/{id}/acl: Set access control policy for a job. Grants read, write, or admin permissions to principals.

High Level Design

1. Job Management (CRUD)

Clients can create, update, and delete job definitions. Each job specifies a schedule (one-time or cron), executable reference, environment version, payload, resource profile, priority, timeout, and retry policy.

A data engineer needs to set up a nightly sales report. They call the API with a cron expression, a container image reference, and resource requirements. The system stores the definition and computes when the job should first run. Let's build this step by step.

The Problem

Job definitions must be durably stored — losing a job definition means a scheduled task silently stops running. The system needs to validate inputs (is the cron expression valid? does the resource profile exceed quotas?), compute the next run time, and make the definition available to the scheduler.

Step 1: Job Service

The Job Service handles all CRUD operations. When a client sends POST /jobs, the service:

- Validates the request: checks cron syntax, verifies the executable reference exists, confirms the resource profile is within the tenant's quota.

- Computes next_run_at from the schedule expression. For cron jobs, this is the next matching time. For one-time jobs, it's the specified run_at timestamp.

- Writes the job definition to the Job Database with status ACTIVE.

- Returns the job ID and computed next_run_at to the client.

For updates (PATCH /jobs/{id}), the service applies partial updates and recomputes next_run_at if the schedule changed. For deletes (DELETE /jobs/{id}), the service sets status to CANCELED. This soft delete preserves the definition and run history for auditing. Hard deletes are avoided because a scheduler may still be working through jobs returned by an earlier scan, and those in-flight references would point at rows that no longer exist.
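To make the validation and next_run_at computation concrete, here is a minimal sketch using only the standard library. It supports a simplified five-field cron syntax (`*`, numbers, ranges, steps, comma lists) and finds the next match by stepping minute by minute. The function names, the `"CRON"`/`"ONE_TIME"` type values, and the choice to AND the day-of-month and day-of-week fields are assumptions for illustration; a production service would more likely rely on a vetted cron library.

```python
from datetime import datetime, timedelta, timezone


def _field_matches(expr: str, value: int, lo: int) -> bool:
    """Match one cron field: '*', 'a', 'a-b', '*/n', 'a-b/n', or a comma list."""
    for part in expr.split(","):
        rng, _, step = part.partition("/")
        step_n = int(step) if step else 1
        if step_n <= 0:
            raise ValueError("step must be positive")
        if rng == "*":
            start, end = lo, None
        elif "-" in rng:
            a, b = rng.split("-")
            start, end = int(a), int(b)
        else:
            start = int(rng)
            end = None if step else start
        if value < start or (end is not None and value > end):
            continue
        if (value - start) % step_n == 0:
            return True
    return False


def next_cron_time(expr: str, after: datetime) -> datetime:
    """Return the first minute strictly after `after` that matches `expr`."""
    fields = expr.split()
    if len(fields) != 5:
        raise ValueError("expected 5 cron fields: minute hour day month weekday")
    minute, hour, dom, month, dow = fields
    t = after.replace(second=0, microsecond=0) + timedelta(minutes=1)
    limit = t + timedelta(days=4 * 366)   # guard against expressions that never match
    while t < limit:
        cron_dow = (t.weekday() + 1) % 7  # cron: 0 = Sunday; Python: 0 = Monday
        if (_field_matches(minute, t.minute, 0)
                and _field_matches(hour, t.hour, 0)
                and _field_matches(dom, t.day, 1)
                and _field_matches(month, t.month, 1)
                and _field_matches(dow, cron_dow, 0)):
            return t
        t += timedelta(minutes=1)
    raise ValueError("cron expression never matches")


def compute_next_run_at(schedule_type: str, schedule_expr: str, now: datetime) -> datetime:
    """CRON: next matching time. ONE_TIME: the run_at timestamp (ISO 8601)."""
    if schedule_type == "CRON":
        return next_cron_time(schedule_expr, now)
    return datetime.fromisoformat(schedule_expr)
```

A malformed expression raises `ValueError`, which the Job Service could translate into a validation error before anything is written to the Job Database.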

Step 2: Job Database

The Job Database stores all job definitions. The critical index is on (status, next_run_at) — this is what the scheduler will scan to find due jobs. We partition by tenant_id for query isolation, so one tenant's queries don't affect another's performance.

A relational database works well here. Job definitions are structured data with well-defined relationships (Job → Run → Attempt). The write rate (roughly 1,200/sec) is comfortably within what a single primary with read replicas can handle.

The architecture at this stage: the Client sends REST requests through the API Gateway to the Job Service, which validates and persists job definitions in the Job Database. This is the foundation — we have durable job storage with computed next run times. The next requirement adds the scheduler that reads these definitions and dispatches work.

2. Scheduling & Dispatch

The system scans for jobs whose scheduled time has arrived, creates Run records with pinned versions, and dispatches them to workers via a durable task queue.

Job definitions are stored. But nothing runs yet. We need a component that wakes up periodically, asks "which jobs are due right now?", and sends them to workers. This is the core scheduling loop.

The Problem

The naive approach: a single scheduler process runs a loop every second, queries SELECT * FROM jobs WHERE next_run_at <= NOW() AND status = 'ACTIVE', and dispatches each result. This works at small scale. But consider midnight UTC, when thousands of cron jobs with 0 0 * * * all become due simultaneously. The query returns 10,000 rows. The scheduler must create 10,000 run records, enqueue 10,000 tasks, and update 10,000 next_run_at values — all within a second to meet our jitter SLA. One process cannot keep up.

Step 1: Time-Bucket Scanning

Instead of querying all due jobs at once, the scheduler divides time into discrete buckets (say 5-second windows). Each tick, the scheduler processes one bucket: "Give me all jobs with next_run_at between 12:00:00 and 12:00:05." This bounds the time range each query covers. Jobs that share the exact same timestamp (10,000 jobs at midnight, for example) still land in one bucket, so a hot bucket must additionally be read in bounded pages rather than in one query.

The scheduler advances bucket by bucket. If it falls behind (processing takes longer than the bucket width), it catches up by processing multiple buckets in sequence. The jitter SLA (p95 under 5 seconds for cron) gives headroom for catch-up.
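The catch-up behavior can be sketched as a generator that walks from the scheduler's cursor to the current time one bucket at a time. This is a minimal illustration: the name `pending_buckets` is hypothetical, and the sketch assumes a bucket becomes eligible as soon as its window opens, which is one possible reading of the text.

```python
from datetime import datetime, timedelta, timezone
from typing import Iterator, Tuple

BUCKET_WIDTH = timedelta(seconds=5)


def pending_buckets(cursor: datetime, now: datetime,
                    width: timedelta = BUCKET_WIDTH) -> Iterator[Tuple[datetime, datetime]]:
    """Yield (start, end) for each bucket from the cursor up to the one containing now."""
    start = cursor
    while start <= now:
        yield start, start + width
        start += width


# Usage: a scheduler whose cursor is at 12:00:00 and that wakes at 12:00:12
# has three buckets to work through before it is caught up.
cursor = datetime(2024, 1, 10, 12, 0, 0, tzinfo=timezone.utc)
now = cursor + timedelta(seconds=12)
backlog = list(pending_buckets(cursor, now))
```

After draining the backlog, the scheduler would persist the end of the last bucket as its new cursor so that a restart resumes from the right place.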

Step 2: Creating Run Records

For each due job, the scheduler creates a Run record. This is where version pinning happens: the run captures a snapshot of the job's executable_ref, env_ref, and payload at creation time. If the job definition is updated later, in-flight runs and their retries still use the original versions.

The scheduler also checks the job's overlap policy. If a cron job's previous run is still active:

- SKIP (default): Drop this tick. Don't create a new run.

- QUEUE: Create the run but mark it as waiting for the previous run to complete.

- ALLOW: Create the run and let both execute concurrently.

After creating the run, the scheduler computes the job's next_run_at for the following cron tick and updates the job record.
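The overlap check reduces to a small decision function. The sketch below is illustrative only: the `OverlapPolicy` enum and `decide_new_run_status` are hypothetical names, and the `QUEUED` and `WAITING` statuses follow the ones used later in the deep dive.

```python
from enum import Enum
from typing import Optional


class OverlapPolicy(Enum):
    SKIP = "SKIP"
    QUEUE = "QUEUE"
    ALLOW = "ALLOW"


def decide_new_run_status(policy: OverlapPolicy, previous_run_active: bool) -> Optional[str]:
    """Return the initial status for the new run, or None if no run is created."""
    if not previous_run_active:
        return "QUEUED"            # nothing overlaps, dispatch normally
    if policy is OverlapPolicy.SKIP:
        return None                # drop this tick
    if policy is OverlapPolicy.QUEUE:
        return "WAITING"           # hold until the previous run completes
    return "QUEUED"                # ALLOW: run concurrently
```

Whatever the decision, the scheduler still advances next_run_at afterwards, so a skipped tick does not cause the job to be re-evaluated for the same slot.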

Step 3: Task Queue

The scheduler doesn't execute jobs directly. It enqueues each new run into a durable task queue. Workers pull from this queue, ensuring at-least-once delivery. If a worker crashes mid-execution, the message returns to the queue and another worker picks it up.

Why a queue instead of direct dispatch? Decoupling. The scheduler focuses on "what should run when." Workers focus on "execute this task." The queue absorbs bursts — if 10,000 cron jobs fire at midnight, the queue buffers them and workers drain at their own pace.

(For background on durable queues and at-least-once delivery, see Message Queues.)

Step 4: Worker Execution

Workers pull tasks from the queue, start execution in an isolated environment (container or sandbox), and report results back. Each worker:

- Pulls a task from the queue.

- Acquires a lease — a time-limited lock that tells the scheduler "I'm working on this." The lease prevents another worker from picking up the same task.

- Sends periodic heartbeats to extend the lease. If heartbeats stop (worker crashed), the lease expires and the scheduler re-enqueues the task.

- Executes the job in an isolated container using the pinned executable and environment refs.

- Reports the result: exit code, duration, logs reference.

The architecture adds three components. The Scheduler Service periodically scans the Job Database for due jobs and creates Run records. It enqueues runnable tasks into the Task Queue. Workers pull from the queue, execute jobs in isolated containers, and report results. The Job Service, API Gateway, and Job Database from the Job Management requirement remain unchanged.

3. Execution & Status Tracking

Workers report execution progress and results. Clients can query job status, list runs with filters, and fetch detailed attempt history.

Jobs are being scheduled and dispatched to workers. But clients have no visibility — they can't tell if a job ran successfully, when it started, how long it took, or why it failed. We need execution tracking and a query interface.

The Problem

Workers execute jobs, but without structured reporting, operators are blind. Did the nightly report run? Did it succeed? If it failed, was it a code bug or an infrastructure issue? How many retries happened? This visibility is critical for both job owners (debugging their code) and the platform team (monitoring system health).

Step 1: Run and Attempt Updates

When a worker starts executing a task, it updates the Run record: sets status to RUNNING, records started_at, and stamps the current_worker_id. As execution progresses, the worker maintains its lease through heartbeats.

When execution completes, the worker creates an Attempt record capturing the outcome:

- exit_code: 0 for success, non-zero for failure

- exit_reason: structured classification (USER_ERROR, TIMEOUT, INFRA_FAILURE, etc.)

- failure_type: USER or SYSTEM (determines retry budget and alerting, covered in the deep dive)

- logs_ref: pointer to full execution logs in object storage

If the attempt failed and the run's retry policy allows more attempts, the worker (or scheduler) re-enqueues the task with an appropriate backoff delay. The Run's attempt_count increments.

If all attempts are exhausted, the Run is marked FAILED. The job's next_run_at still advances for cron jobs — a failed run doesn't block future scheduled runs.
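One way to picture the retry decision is capped exponential backoff driven by the run's attempt count. The text does not specify the backoff formula, so the `RetryPolicy` fields, the default values, and the doubling schedule below are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RetryPolicy:
    max_attempts: int = 3        # total tries, including the first one
    base_delay_s: float = 10.0   # delay before the second attempt
    max_delay_s: float = 600.0   # upper bound on any single delay


def next_retry_delay(policy: RetryPolicy, attempt_count: int) -> Optional[float]:
    """Given the number of attempts already made, return the delay in seconds
    before the next attempt, or None when the budget is exhausted and the
    Run should be marked FAILED."""
    if attempt_count >= policy.max_attempts:
        return None
    delay = policy.base_delay_s * 2 ** (attempt_count - 1)
    return min(delay, policy.max_delay_s)
```

With the defaults, the first failure retries after 10 seconds, the second after 20, and the third exhausts the budget. Real systems usually add random jitter to the delay so that many failed runs do not retry in lockstep; it is omitted here to keep the sketch deterministic.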

Step 2: Status Query Service

The Status Service provides read access to job definitions and execution history. It reads from the Run/Attempt Store (which may be a read replica of the Job Database, or a separate optimized store for high-volume queries).

Key queries:

- GET /jobs/{id}: Returns the job definition plus its latest run status. One-stop view for "is this job healthy?"

- GET /jobs/{id}/runs?status=FAILED&from=&to=: Lists runs with filters. Pagination via cursor for large result sets.

- GET /runs/{run_id}: Detailed view of a specific run including all attempts.

For hot run data (last 7 days), queries hit the primary store. For cold history (older than 7 days), queries route to an archive store. This keeps the primary store small and fast.

Step 3: Notifications

Beyond pull-based queries, clients often want push notifications: "Tell me when my job fails." The Status Service emits events on run state transitions. Clients can subscribe to these events via webhooks or an internal event bus for integration with alerting systems.

The architecture adds two components. The Run/Attempt Store receives execution results from workers and stores structured run and attempt records. The Status Service provides query APIs for clients to check job health and run history. Workers now report heartbeats to the Scheduler and write results to the Run/Attempt Store. All previous components remain unchanged.

4. Access Control & Auditing

The system enforces multi-tenant isolation with read/write/admin permission levels. All control-plane actions are logged in an immutable audit trail. Secrets are managed through external vault references.

So far we've built job management, scheduling, dispatch, and status tracking. But there's a critical piece missing: who is allowed to do what? A scheduler is both a control plane (defining what code runs) and an execution plane (running that code with access to data and APIs). Without access control, any user can create a job that executes arbitrary code, exfiltrate data through job payloads, or cancel another team's critical jobs.

The Problem

Consider a multi-tenant SaaS platform. Team A should be able to define and monitor their own jobs but shouldn't see Team B's job payloads (which might contain sensitive configuration). An oncall engineer should be able to pause a runaway job but shouldn't be able to modify job definitions in production. A CI/CD service principal should be able to trigger runs but not change schedules.

These are different permission levels applied to different principals on different resources. We need a structured access control model.

Step 1: RBAC with Namespace Scoping

We define three roles with ascending permissions:

- Reader: View job definitions and run history. Cannot modify anything.

- Editor: Everything Reader can do, plus create/update/delete jobs and trigger manual runs.

- Admin: Everything Editor can do, plus manage ACLs, pause queues, change priorities, and replay failed runs.

Roles are assigned at two levels:

- Namespace/queue level: "Team A has Editor on the analytics queue". Applies to all jobs in that queue.

- Job level: "User X has Admin on job_abc123". A fine-grained override for specific jobs.

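Job-level grants are combined with namespace grants rather than replacing them: the most permissive applicable role wins. A job-level grant can therefore raise a principal's access to a specific job but cannot lower it.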
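The "most permissive role wins" rule can be sketched with an ordered enum. The grant tables are plain dictionaries here, and the action names in `REQUIRED_ROLE` are hypothetical labels chosen for illustration; a real Auth/ACL Service would back this with its own store.

```python
from enum import IntEnum
from typing import Dict, Optional, Tuple


class Role(IntEnum):
    READER = 1
    EDITOR = 2
    ADMIN = 3


# Minimum role per action (hypothetical action names).
REQUIRED_ROLE = {
    "get_job": Role.READER,
    "list_runs": Role.READER,
    "create_job": Role.EDITOR,
    "update_job": Role.EDITOR,
    "trigger_run": Role.EDITOR,
    "modify_acl": Role.ADMIN,
}

Grants = Dict[Tuple[str, str], Role]  # (principal, resource) -> role


def effective_role(principal: str, job_id: str, namespace: str,
                   job_grants: Grants, namespace_grants: Grants) -> Optional[Role]:
    """Most permissive of the job-level and namespace-level grants, if any."""
    candidates = [
        job_grants.get((principal, job_id)),
        namespace_grants.get((principal, namespace)),
    ]
    roles = [r for r in candidates if r is not None]
    return max(roles) if roles else None


def is_allowed(principal: str, action: str, job_id: str, namespace: str,
               job_grants: Grants, namespace_grants: Grants) -> bool:
    role = effective_role(principal, job_id, namespace, job_grants, namespace_grants)
    return role is not None and role >= REQUIRED_ROLE[action]
```

A principal with no grant at either level gets `None` and is denied every action, which keeps the default closed.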

Step 2: Enforcement

The Auth/ACL Service sits behind the API Gateway. Every API request is intercepted:

- Extract the caller's identity (API key, JWT token, service principal).

- Look up the caller's roles for the target resource (job or namespace).

- Check if the role permits the requested action.

- If denied, return 403 Forbidden. If allowed, forward to the service.

Authorization checks are cached locally (with short TTL) to avoid calling the ACL service on every request. ACL updates invalidate the cache.

Step 3: Audit Logging

Every control-plane action produces an immutable audit log entry:

- Who: principal identity

- What: action performed (create_job, update_schedule, trigger_run, cancel_run, modify_acl)

- When: timestamp

- Target: resource identifier

- Before/After: field-level diff for updates

Audit logs are append-only and stored separately from operational data. They're essential for security investigations ("Who changed the executable for job X last Tuesday?") and compliance.
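As an illustration of the entry structure, the sketch below builds one audit record with a field-level diff and serializes it as JSON. The key names and the `field_diff` and `audit_entry` helpers are assumptions; the text only specifies the who/what/when/target/before-after content.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict


def field_diff(before: Dict[str, Any], after: Dict[str, Any]) -> Dict[str, Dict[str, Any]]:
    """Return only the fields whose values differ, with before/after values."""
    keys = set(before) | set(after)
    return {
        k: {"before": before.get(k), "after": after.get(k)}
        for k in sorted(keys)
        if before.get(k) != after.get(k)
    }


def audit_entry(principal: str, action: str, target: str,
                before: Dict[str, Any], after: Dict[str, Any],
                when: datetime) -> str:
    """Serialize one audit record; callers append it to the log and never rewrite it."""
    entry = {
        "who": principal,
        "what": action,
        "when": when.isoformat(),
        "target": target,
        "diff": field_diff(before, after),
    }
    return json.dumps(entry, sort_keys=True)


# Usage: record a change to a job's executable reference.
line = audit_entry(
    "user_x", "update_job", "job_abc123",
    before={"executable_ref": "img:v1", "timeout_s": 300},
    after={"executable_ref": "img:v2", "timeout_s": 300},
    when=datetime(2024, 1, 9, 8, 0, tzinfo=timezone.utc),
)
```

Storing only changed fields keeps entries small while still answering questions like who changed the executable and what it was before.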

Step 4: Credential Management

Jobs often need credentials to access databases, APIs, or cloud resources. These secrets must never appear in the job payload or definition.

Instead, jobs reference secrets through opaque handles: secret://vault/db-credentials/prod. At execution time, the worker fetches the actual credential from an external secret vault and injects it into the job's environment. The credential is scoped (only accessible to this specific job) and short-lived (TTL expires after the run completes).

This limits blast radius: a compromised job store doesn't leak credentials. Secret rotation happens in the vault without updating job definitions.
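A worker-side resolution step might look like the following sketch. It parses handles of the form `secret://vault/db-credentials/prod` and swaps them for real values just before execution. The `fetch` callable stands in for a vault client and is purely hypothetical; no specific vault product's API is implied.

```python
from typing import Callable, Dict, Tuple
from urllib.parse import urlparse


def parse_secret_ref(ref: str) -> Tuple[str, str]:
    """Split 'secret://<backend>/<path>' into (backend, path)."""
    parsed = urlparse(ref)
    path = parsed.path.strip("/")
    if parsed.scheme != "secret" or not parsed.netloc or not path:
        raise ValueError(f"not a valid secret reference: {ref!r}")
    return parsed.netloc, path


def resolve_env(env: Dict[str, str],
                fetch: Callable[[str, str], str]) -> Dict[str, str]:
    """Return a copy of env with every secret handle replaced by its value.

    `fetch(backend, path)` is a hypothetical vault lookup supplied by the worker.
    """
    resolved = {}
    for name, value in env.items():
        if value.startswith("secret://"):
            backend, path = parse_secret_ref(value)
            resolved[name] = fetch(backend, path)
        else:
            resolved[name] = value
    return resolved
```

The job definition only ever stores the handle, so the resolved dictionary should live in the worker's memory for the duration of the run and never be written back to the job store or logs.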

The architecture adds three components. The Auth/ACL Service intercepts all API requests at the gateway to enforce role-based permissions. The Audit Log captures immutable records of every control-plane action. The Secret Vault stores credentials externally, with workers fetching scoped, short-lived credentials at execution time. All previous components remain unchanged.

Deep Dive Questions

How do you schedule due jobs reliably at scale without missing jobs or creating hotspots?

It's midnight UTC. 8,000 cron jobs have schedule_expr = "0 0 * * *". Their next_run_at values all point to 00:00:00. A single scheduler instance runs SELECT * FROM jobs WHERE next_run_at <= NOW() and gets 8,000 rows. It must create run records, enqueue tasks, and update next_run_at for each — all before the 00:00:05 bucket closes. This is the "midnight problem."

Approach: DB-Indexed Scan with Time Buckets

The Job Database is the source of truth. The (status, next_run_at) index makes due-job queries efficient. But we don't query all due jobs at once — we advance through time buckets.

Each scheduler tick processes one bucket (say 5 seconds wide):

- Query: SELECT ... WHERE status = 'ACTIVE' AND next_run_at BETWEEN :bucket_start AND :bucket_end ORDER BY priority DESC LIMIT 1000

- For each job in the result: create a Run record, enqueue to the task queue, update next_run_at to the next cron tick.

- If the result had 1,000 rows (hit the limit), there are more jobs in this bucket, so process another page before advancing.

- Advance to the next bucket.

The LIMIT clause bounds work per query. Pagination handles hot buckets. The 5-second bucket width means even if processing takes a few seconds, we stay within the cron jitter SLA.
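The paged bucket scan can be tried end to end against an in-memory SQLite database. This is a sketch under simplifying assumptions: next_run_at is stored as integer epoch seconds, the table has only the columns the query needs, and `next_tick` is a caller-supplied function standing in for the cron computation. Run creation and enqueueing are reduced to a comment.

```python
import sqlite3
from typing import Callable

PAGE_SIZE = 1000  # mirrors the LIMIT 1000 in the query above


def make_db() -> sqlite3.Connection:
    """Create an in-memory jobs table with the (status, next_run_at) index."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE jobs (job_id TEXT PRIMARY KEY, status TEXT, "
        "priority INTEGER, next_run_at INTEGER)"
    )
    conn.execute("CREATE INDEX idx_due ON jobs (status, next_run_at)")
    return conn


def process_bucket(conn: sqlite3.Connection, bucket_start: int, bucket_end: int,
                   next_tick: Callable[[int], int], page_size: int = PAGE_SIZE) -> int:
    """Drain one bucket page by page and return the number of jobs dispatched.

    Termination relies on next_tick moving each job out of the bucket.
    """
    dispatched = 0
    while True:
        rows = conn.execute(
            "SELECT job_id, next_run_at FROM jobs "
            "WHERE status = 'ACTIVE' AND next_run_at BETWEEN ? AND ? "
            "ORDER BY priority DESC LIMIT ?",
            (bucket_start, bucket_end, page_size),
        ).fetchall()
        for job_id, due_at in rows:
            # A real scheduler would create the Run record and enqueue the task here.
            conn.execute(
                "UPDATE jobs SET next_run_at = ? WHERE job_id = ?",
                (next_tick(due_at), job_id),
            )
            dispatched += 1
        conn.commit()
        if len(rows) < page_size:   # short page: the bucket is drained
            return dispatched
```

Because each processed job has its next_run_at moved forward, re-running the same query naturally returns the next page, so no OFFSET is needed.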

Distributed Coordination

A single scheduler is a single point of failure. We need multiple scheduler instances for availability. But if two schedulers process the same bucket, they'd dispatch the same job twice.

This is the same distributed consumer coordination problem covered in Distributed Message Queue. Each scheduler instance owns a subset of the time-bucket space (or job partitions). We use a coordination service to assign partitions to schedulers, with fencing tokens to prevent stale schedulers from dispatching after losing leadership.

Partitioning strategy: hash job_id into N partitions. Each scheduler instance owns a subset of partitions. A scheduler only processes due jobs whose job_id hashes to its assigned partitions. If a scheduler fails, its partitions are redistributed to surviving instances.
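The partitioning scheme can be sketched with a stable hash and a simple round-robin assignment. Both function names are hypothetical, and the assignment here is computed locally for illustration; in the design described above it would come from the coordination service, together with fencing tokens that this sketch does not model.

```python
import hashlib
from typing import Dict, List


def partition_of(job_id: str, num_partitions: int) -> int:
    """Map a job_id to a partition using a hash that is stable across processes.

    Python's built-in hash() is randomized per process, so it is unsuitable here.
    """
    digest = hashlib.sha256(job_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


def assign_partitions(schedulers: List[str], num_partitions: int) -> Dict[str, List[int]]:
    """Spread partitions round-robin across the live scheduler instances."""
    live = sorted(schedulers)
    if not live:
        raise ValueError("no live schedulers")
    assignment: Dict[str, List[int]] = {s: [] for s in live}
    for p in range(num_partitions):
        assignment[live[p % len(live)]].append(p)
    return assignment
```

When an instance fails, calling `assign_partitions` again with the surviving instances redistributes every partition, so no job is left without an owner. Round-robin reassignment moves more partitions than strictly necessary; a production system might prefer a scheme that minimizes movement.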

Cron Overlap Policy

When a cron job's previous run is still executing at the next scheduled tick, the system consults the job's overlap_policy:

- SKIP (default): Don't create a new run. Update next_run_at to the tick after. This prevents runaway accumulation: a slow job doesn't cause an ever-growing backlog.

- QUEUE: Create the run with status WAITING. When the previous run completes, the scheduler promotes WAITING runs to QUEUED.

- ALLOW: Create and dispatch the run immediately. Both runs execute concurrently. Use for jobs that are safe to overlap (e.g., independent data partition processing).

Dispatch and Worker Protocol

Between the scheduler enqueuing a task and the worker executing it, the dispatch layer handles:

- At-least-once delivery: The task queue guarantees each message is delivered at least once. Workers must be idempotent (per the out-of-scope assumption).

- Lease protocol: When a worker pulls a task, it acquires a time-limited lease (say 5 minutes). The worker sends heartbeats every 30 seconds to extend the lease. If heartbeats stop, the lease expires and the task becomes available for another worker.

- Dead-letter handling: If a task fails repeatedly (exceeds the queue's own retry limit, distinct from the job's retry policy), it moves to a dead-letter queue for manual investigation. This prevents poison messages from blocking the queue.
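The lease protocol can be illustrated with a small in-memory table. This is a single-process sketch: `LeaseTable` and its methods are hypothetical names, time is passed in explicitly to keep the behavior deterministic, and a real deployment would need an atomic compare-and-set in a shared store rather than a Python dictionary.

```python
from dataclasses import dataclass
from typing import Dict

LEASE_SECONDS = 300       # 5-minute lease, as in the text
HEARTBEAT_SECONDS = 30    # workers extend the lease at this interval


@dataclass
class Lease:
    worker_id: str
    expires_at: float


class LeaseTable:
    def __init__(self, lease_seconds: float = LEASE_SECONDS) -> None:
        self._leases: Dict[str, Lease] = {}
        self._lease_seconds = lease_seconds

    def acquire(self, task_id: str, worker_id: str, now: float) -> bool:
        """Grant the lease unless another worker holds an unexpired one."""
        current = self._leases.get(task_id)
        if (current is not None and current.expires_at > now
                and current.worker_id != worker_id):
            return False
        self._leases[task_id] = Lease(worker_id, now + self._lease_seconds)
        return True

    def heartbeat(self, task_id: str, worker_id: str, now: float) -> bool:
        """Extend the lease; fails if it expired or belongs to another worker."""
        current = self._leases.get(task_id)
        if (current is None or current.worker_id != worker_id
                or current.expires_at <= now):
            return False
        current.expires_at = now + self._lease_seconds
        return True
```

A worker whose heartbeat returns `False` has lost the lease and should stop reporting results for that task, since another worker may already have picked it up.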

Grasping the building blocks ("the lego pieces")

This part of the guide will focus on the various components that are often used to construct a system (the building blocks), and the design templates that provide a framework for structuring these blocks.

Core Building blocks

At the bare minimum you should know the core building blocks of system design

- Scaling stateless services with load balancing

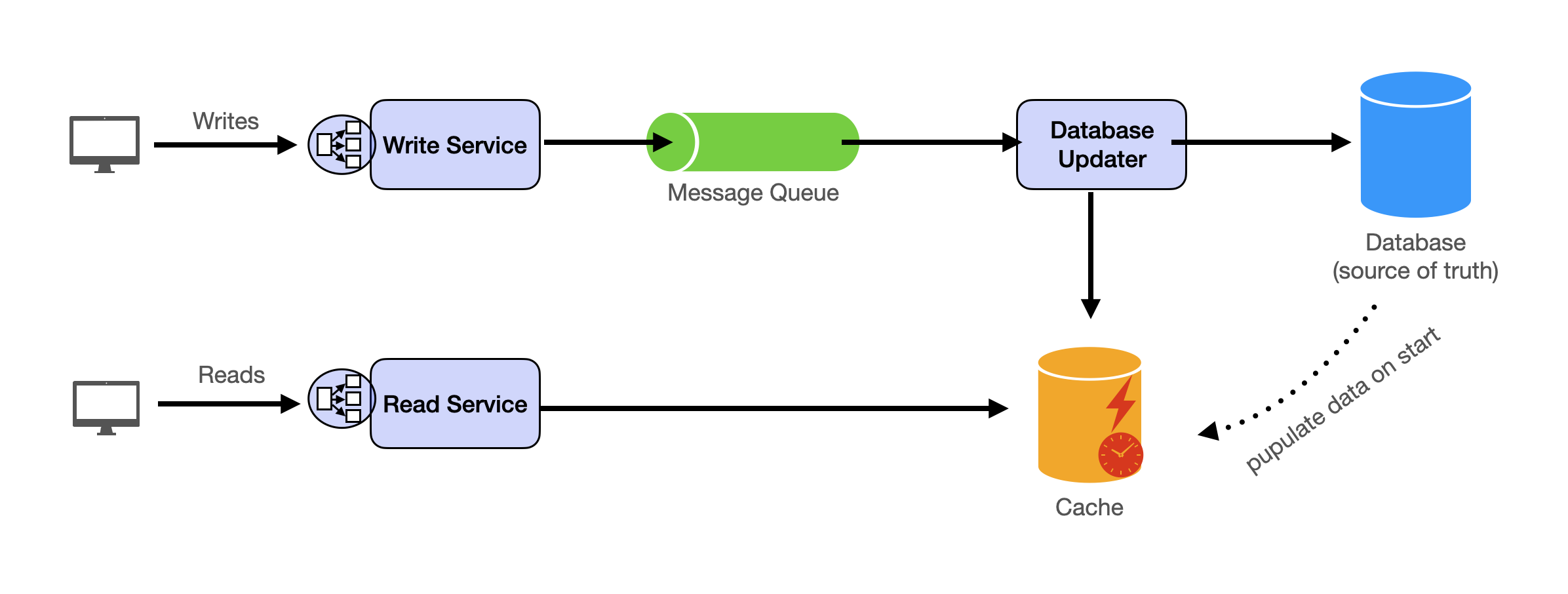

- Scaling database reads with replication and caching

- Scaling database writes with partitioning (aka sharding)

- Scaling data flow with message queues

System Design Template

With these building blocks, you will be able to apply our template to solve many system design problems. We will dive into the details in the Design Template section. Here’s a sneak peek:

Additional Building Blocks

Additionally, you will want to understand these concepts:

- Processing large amounts of data (aka “big data”) with batch and stream processing

  - Particularly useful for solving data-intensive problems such as designing an analytics app

- Achieving consistency across services using distributed transactions or event sourcing

  - Particularly useful for solving problems that require strict transactions such as designing financial apps

- Full text search: full-text index

- Storing data for the long term: data warehousing

On top of these, there is ad hoc knowledge you will want for certain problems. For example, geohashing for designing location-based services like Yelp or Uber, or operational transformation for problems like designing Google Docs. You can learn these on a case-by-case basis. System design interviews are supposed to test your general design skills and not specific knowledge.

Working through problems and building solutions using the building blocks

Finally, we have a series of practical problems for you to work through. You can find the problems in /problems. This hands-on practice will not only help you apply the principles learned but will also enhance your understanding of how to use the building blocks to construct effective solutions. The list of questions keeps growing: we are actively adding more.